摘要

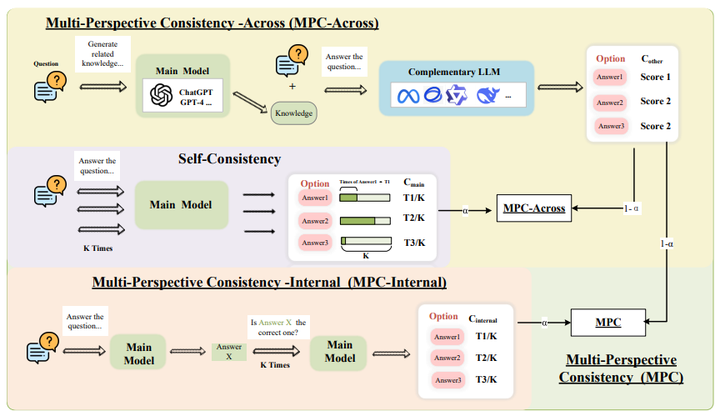

In the deployment of large language models (LLMs), accurate confidence estimation is critical for assessing the credibility of model predictions. However, existing methods often fail to overcome the issue of overconfidence on incorrect answers. In this work, we focus on improving the confidence estimation of large language models. Considering the fragility of self-awareness in language models, we introduce a Multi-Perspective Consistency (MPC) method. We leverage complementary insights from different perspectives within models (MPC-Internal) and across different models (MPC-Across) to mitigate the issue of overconfidence arising from a singular viewpoint. The experimental results on eight publicly available datasets show that our MPC achieves state-of-the-art performance. Further analyses indicate that MPC can mitigate the problem of overconfidence and is effectively scalable to other models.