DivTOD:Unleashing the Power of LLMs for Diversifying Task-Oriented Dialogue Representations

摘要

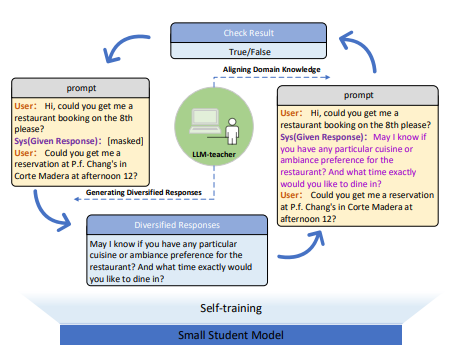

Language models pre-trained on general text have achieved impressive results in diverse fields. Yet, the distinct linguistic characteristics of task-oriented dialogues (TOD) compared to general text limit the practical utility of existing language models. Current task-oriented dialogue pre-training methods overlook the one-to-many property of conversations, where multiple responses can be appropriate given the same conversation context. In this paper, we propose a novel dialogue pre-training model called DivTOD, which collaborates with LLMs to learn diverse task-oriented dialogue representations. DivTOD guides LLMs in transferring diverse knowledge to smaller models while removing domain knowledge that contradicts task-oriented dialogues. Experiments show that our model outperforms strong TOD baselines on various downstream dialogue tasks and learns the intrinsic diversity of task-oriented dialogues.